Decentralized systems must handle failures differently than centralized ones. Without a central authority, these networks face issues like partial node failures, data loss, malicious behavior (Byzantine faults), and error propagation. For example, even small errors can ripple through a system, leading to widespread disruptions. Blockchain networks, like Solana, have experienced multiple outages, highlighting the need for fault-tolerant designs.

Key challenges and solutions include:

- Node Failures & Data Loss: Crashes from hardware or software issues can lead to data loss. Techniques like replication and erasure coding help safeguard data.

- Byzantine Faults: Malicious nodes can mislead the system. Consensus algorithms like PBFT and Tendermint mitigate this but come with high communication costs.

- Error Propagation: Small glitches can spread across nodes, affecting performance. Strong isolation and recovery mechanisms are critical.

Solutions:

- Redundancy: Strategies like erasure coding reduce storage overhead while maintaining data reliability.

- Consensus Mechanisms: Raft and Tendermint help achieve agreement despite failures or malicious activity.

- Testing & Recovery: Fault injection testing and persistent state recovery help systems bounce back after failures.

- Advanced Cryptography: Threshold cryptography and erasure coding strengthen both consensus and data availability.

Emerging tools like cryptographic techniques and self-healing protocols are pushing decentralized systems toward greater reliability. However, balancing scalability, cost, and fault tolerance remains a challenge.

Main Challenges in Achieving Fault Tolerance

Node Failures and Data Loss

In decentralized systems, node crashes can wreak havoc, leaving behind a trail of issues. Hardware problems like disk controller failures, power outages, and NIC buffer exhaustion are common culprits. On the software side, bugs can lead to out-of-memory errors, unhandled exceptions, or memory corruption. Then there are network issues – packet loss, asymmetric routing, BGP route leaks, and misconfigured firewalls – all of which can add to the chaos. Timing problems, such as delays caused by garbage collection or clock synchronization drift, can even make healthy nodes seem like they’ve failed.

But the real danger lies in data loss. Without proper replication, a single hardware failure could permanently erase critical data. For instance, soft errors in commodity DRAM occur at an estimated rate of about 1 per 1 GB per month, highlighting how fragile data integrity can be [2].

Byzantine Faults and Inconsistent States

Byzantine faults are a whole different beast. Unlike standard hardware or software failures, these faults involve unpredictable or even malicious behavior. A Byzantine node doesn’t just crash – it might actively mislead the system. For example, it could send conflicting data to different observers during the same protocol round, a phenomenon known as equivocation [6]. Other possible actions include generating fake data, forging acknowledgments, or replaying old messages to disrupt failure detection systems [6].

"Byzantine failures imply no restrictions on what errors can be created, which means that a failed node can generate arbitrary data, including data that makes it appear like a functioning node to a subset of other nodes." – Wikipedia [6]

Dealing with Byzantine faults is no small task. For a system to tolerate f Byzantine failures, it needs at least 3f + 1 nodes, far more than the 2f + 1 nodes required for handling simple crashes [2] [7]. In a cluster of 100 nodes, a Byzantine Fault Tolerance protocol could result in roughly 9,900 pairwise message exchanges per round [2] [4]. This quadratic communication complexity (O(n²)) makes scaling Byzantine fault-tolerant systems a costly endeavor.

Error Propagation in Large-Scale Systems

In large-scale systems, small errors can spiral out of control. A single corrupted message can ripple through interconnected nodes, causing failures in dependent services. Without proper isolation, resource saturation in one part of the system can spread like wildfire. Even a single slow node can create backpressure, dragging down the performance of an entire cluster.

Byzantine faults make detection even harder. These faults can mimic normal network behavior, with nodes appearing reliable to some peers while failing others. This makes them nearly impossible to distinguish from simple network latency without rigorous cross-checking [6] [2]. By the time the issue is identified, the error may have already spread across multiple layers, requiring coordinated recovery efforts across many nodes. These challenges underscore the need for strong redundancy and recovery mechanisms, which will be explored in the next section.

sbb-itb-c5fef17

Byzantine Fault Tolerance: Tolerating Bad Actors in Distributed Systems

Solutions for Fault Tolerance

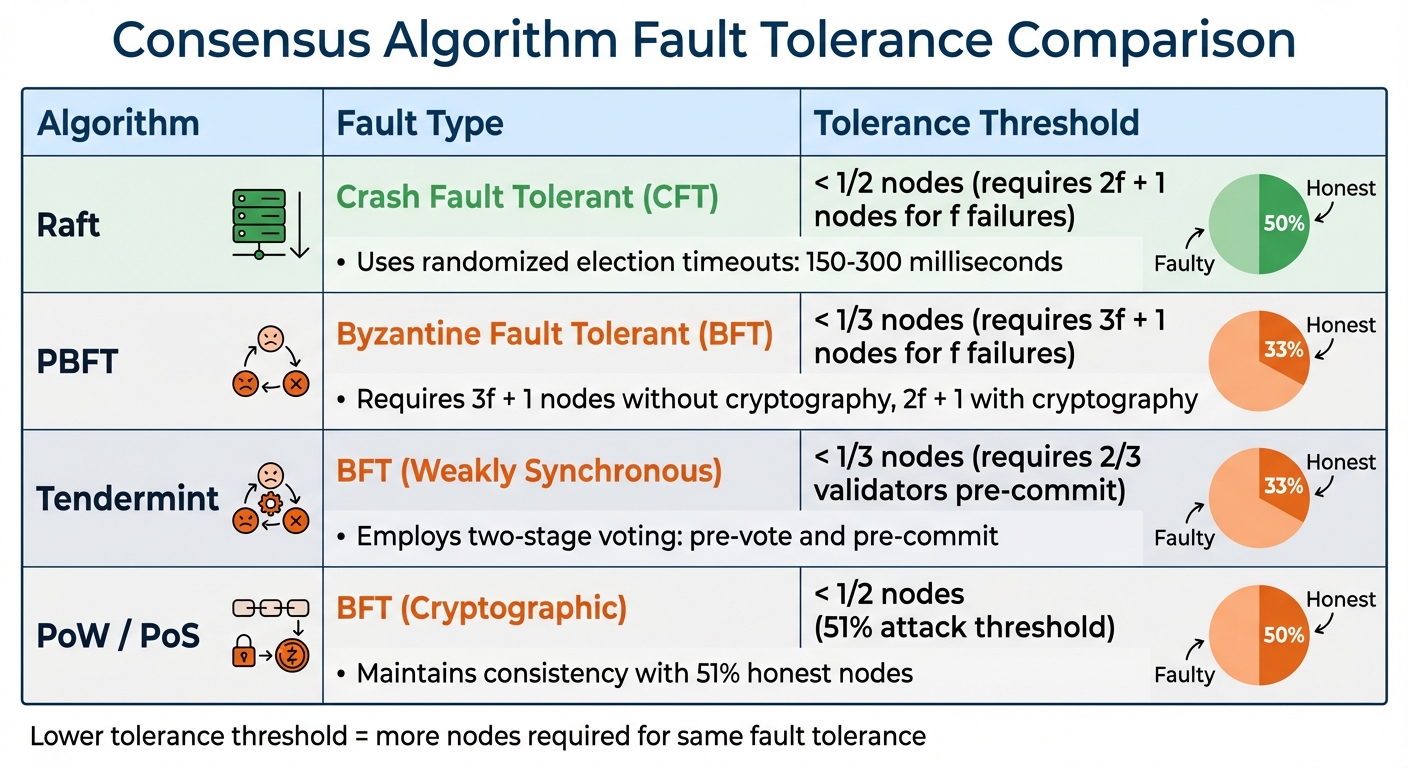

Consensus Algorithm Fault Tolerance Comparison: Raft vs PBFT vs Tendermint

Redundancy and Replication Strategies

To avoid data loss, replicating data across nodes is key. Different redundancy strategies come with varying levels of cost and reliability. Full replication, where every node stores an identical copy of the data, ensures high availability but demands significant storage. For example, achieving reliability as high as twelve nines (a loss probability of less than 10⁻¹²) can require a 25x storage overhead [13].

A more efficient approach is erasure coding, which divides data into K data chunks and M parity chunks. This method allows data reconstruction using any K pieces [9]. In the Swarm decentralized storage network, data is broken into 4KB chunks, each identified by a unique hash. These chunks are distributed across nodes based on their proximity in the network’s address space [5]. In March 2025, the Swarm Foundation introduced Bee Track 2.5, an updated version of the "push-sync" protocol. This update improved chunk distribution and incorporated the BZZ token to incentivize nodes for maintaining redundant storage [5].

Other systems, like Walrus, take replication efficiency further with two-dimensional erasure coding. Its "Red Stuff" protocol achieves high security with a 4.5x replication factor and supports self-healing recovery, where the bandwidth required scales directly with the amount of lost data instead of needing full reconstruction [13]. Additionally, in January 2026, researchers at the China University of Petroleum introduced a Dynamic Heterogeneous Redundancy (DHR) architecture. This system uses five heterogeneous nodes, each running different operating systems and library versions, to minimize the risk of a single exploit compromising the entire system [8].

While redundancy protects data, consensus algorithms ensure system-wide agreement, even under failure conditions.

Consensus Algorithms for Fault Mitigation

Consensus mechanisms help nodes agree on the system’s state, even when some nodes fail or act maliciously. Crash Fault Tolerant (CFT) algorithms like Raft address simple node failures by requiring a majority (2f + 1 nodes for f failures) to reach consensus. Raft leverages randomized election timeouts, typically set between 150–300 milliseconds, to avoid split votes and recover quickly after a leader failure [3].

For more complex scenarios involving malicious nodes, Byzantine Fault Tolerant (BFT) algorithms like PBFT and Tendermint step in. Without cryptographic techniques, these require 3f + 1 nodes to tolerate f Byzantine faults, but with cryptography, only 2f + 1 nodes are needed. This means the system remains consistent as long as 51% of nodes behave honestly [3]. Tendermint, for instance, employs a two-stage voting process – pre-vote and pre-commit – ensuring a block is committed only when over two-thirds of validators pre-commit in the same round [3].

| Algorithm | Fault Type | Tolerance Threshold |

|---|---|---|

| Raft | Crash Fault Tolerant | < 1/2 nodes |

| PBFT | Byzantine Fault Tolerant | < 1/3 nodes |

| Tendermint | BFT (Weakly Synchronous) | < 1/3 nodes |

| PoW / PoS | BFT (Cryptographic) | < 1/2 nodes (51%) |

In 2025, Solana’s Anza Research Division proposed the Alpenglow consensus upgrade to replace Proof of History and Tower BFT. This system introduced Votor (a dual-path voting mechanism) and Rotor (a single-hop relay system), achieving 100-150 millisecond finality while reducing validator operational costs by 85-90% [10].

"Votor, the new voting mechanism, implements a dual-path consensus system where blocks can achieve finalization through either a fast path requiring 80% stake approval… or a slow path requiring 60%" – Vangelis from Sei Labs Research [10]

Testing and Recovery Mechanisms

Beyond redundancy and consensus, testing and recovery mechanisms are critical for ensuring resilience. One effective method is fault injection testing, which simulates failures by killing processes or dropping network packets to observe recovery behavior. For instance, TiKV‘s version 7.1 documentation illustrates this using a six-node cluster and the go-ycsb tool to load 1,000,000 records. By killing the leader process, the system demonstrates recovery through leader re-election, monitored via Grafana [11].

More advanced testing methods measure latency differences between normal and adverse conditions using sensitivity scores [1]. MicroRaft‘s HighLoadTest, for example, stresses a 3-node Raft group by delaying followers and overloading the leader’s buffer. This triggers a CannotReplicateException, forcing the system to reject new work rather than risk unsafe operation [12].

"Backpressure is a feature, not a bug. If the leader cannot safely keep up, it should reject new work instead of pretending throughput is infinite" – MicroRaft documentation [12]

For recovery, maintaining persistent state is essential. In Raft-based systems, nodes must be able to read their persisted state to safely rejoin after a restart [12]. Walrus, developed by Mysten Labs, addressed this with the "Red Stuff" protocol in June 2025. This protocol introduced a multi-stage epoch change process, enabling storage committees to transition seamlessly without disrupting read/write operations [13].

How Advanced Cryptography Improves Fault Tolerance

Threshold Cryptography for Consensus

Advanced cryptographic techniques play a key role in improving both consensus reliability and data availability. One such method is threshold cryptography, which replaces the traditional reliance on a single private key by distributing key fragments. Techniques like Shamir Secret Sharing allow a subset of nodes (a t-of-n system) to collaboratively produce a valid signature. This means any group of t nodes can work together to sign, while groups smaller than t cannot reconstruct the key.

This approach strengthens Byzantine fault tolerance by reducing the number of nodes required for consensus. For example, cryptographic methods can lower the requirement from 3t + 1 nodes to 2t + 1 nodes, enabling systems to tolerate up to 51% faults in asynchronous networks and 50% in synchronous networks. With active observation mechanisms, fault tolerance could theoretically reach as high as 99%.

Modern protocols like FROST (Flexible Round-Optimized Schnorr Threshold Signatures) take this further. FROST uses a two-round signing process that is both communication-efficient and compatible with blockchain systems. The process involves key steps such as nonce commitments, partial signatures, and their aggregation. To ensure maximum security, key shares should be stored across independent failure domains – this could mean using separate cloud accounts, IAM boundaries, or secrets managers to prevent correlated failures.

Additionally, systems can pair consensus security with data integrity through advanced erasure coding, further enhancing fault tolerance.

Erasure Coding for Data Availability

While threshold cryptography secures consensus, erasure coding ensures data remains accessible even in the face of failures. This technique works by splitting data into k data chunks and m parity chunks, allowing reconstruction from any k fragments. Unlike simple replication, erasure coding achieves similar fault tolerance with much less storage overhead – typically ranging between 1.2× and 1.5×.

For example:

- Backblaze B2 uses a 17+3 erasure coding setup, which can handle the failure of three servers while maintaining a storage overhead of just 1.18×.

- OVH Cloud employs an 8+4 scheme, tolerating four simultaneous failures with about 1.5× overhead.

In blockchain systems, Solana’s Turbine protocol demonstrates the power of erasure coding. Turbine splits data into small units called "shreds" and uses a 32:32 erasure set. Even with a 65% success rate for individual shreds, the protocol achieves over 99% block recovery probability.

Performance-wise, implementations like Intel ISA-L deliver impressive speeds – up to 800 MB/s for encoding and 400 MB/s for decoding. Similarly, the Hadoop Distributed File System (HDFS) uses a RS-6-3-1024k policy (six data units, three parity units, and a 1 MB stripe size). This reduces storage overhead by around 50% compared to traditional triple replication methods.

Erasure coding is particularly valuable for modular blockchains, where execution and data availability are separated. Its ability to enable efficient data recovery ensures these systems remain resilient, even under challenging conditions.

Conclusion

Key Takeaways

Fault tolerance is a cornerstone challenge in decentralized systems, especially within the blockchain industry. Issues like Byzantine faults, node failures, and error propagation demand solutions that strike a balance between security, efficiency, and scalability. Traditional consensus models often come with tough trade-offs – for instance, non-cryptographic consensus requires 3t + 1 nodes, while cryptographic models reduce this to 2t + 1 nodes [15][3].

Recent outages in blockchain networks have underscored the disparity between theoretical fault tolerance and real-world performance. Availability rates often fall short of the 99.9% reliability benchmark set by traditional cloud services [1]. These shortcomings highlight the need for practical advancements to close the gap.

Emerging cryptographic techniques show promise. For example, threshold cryptography combined with active observation mechanisms could potentially achieve fault tolerance levels as high as 99% [14]. Additionally, erasure coding offers a more storage-efficient approach to data availability, significantly reducing overhead compared to traditional replication methods [13].

Future Research and Development Directions

The future of fault tolerance lies in advancing innovative technologies and refining existing models. While theoretical Byzantine Fault Tolerance (BFT) can handle up to 33% of faulty nodes, practical implementations like Filecoin‘s Expected Consensus are limited to just 20% of the network’s total storage capacity [9]. Addressing this gap requires new approaches, such as storage-weighted consensus mechanisms. These models evaluate adversaries based on their resource contributions rather than node count, offering a more robust defense against Sybil attacks in open networks.

The integration of AI and Web3 technologies opens exciting possibilities for improving fault tolerance. Deep learning models already demonstrate accuracy rates of 60% to 96% for unstructured data and up to 99% for structured datasets in fault recovery scenarios [16]. As these systems evolve, they could enable automated fault detection and recovery, especially in complex situations where traditional redundancy methods fall short. Companies like Bestla VC (https://bestla.vc), which focus on the intersection of AI, Web3, and decentralized infrastructure, are exploring these advancements. By combining cutting-edge cryptography with machine learning, the blockchain ecosystem can move toward more resilient and reliable decentralized systems.

FAQs

How do I choose between replication and erasure coding?

When making a decision, consider data access patterns, storage costs, and network efficiency. If you’re dealing with frequently accessed (hot) data, replication is a practical choice. It’s fast and straightforward, though it does come with higher storage overhead. On the other hand, for infrequently accessed (cold) data, erasure coding is a more economical option. It minimizes storage requirements and provides flexible durability. However, keep in mind that it demands more in terms of I/O and computational resources during retrieval and repairs.

When should a system use Raft versus a BFT consensus like Tendermint?

When you’re prioritizing simplicity, efficiency, and strong consistency in a trusted environment – like distributed databases – Raft is the way to go. It’s designed to handle crash faults effectively and is relatively straightforward to implement.

On the other hand, if you’re working on systems that need to guard against malicious actors or Byzantine failures, such as blockchains or financial platforms, BFT algorithms like Tendermint are a better fit. These algorithms provide stronger security in adversarial conditions but come with added complexity and typically require more nodes to function effectively.

What’s the simplest way to test fault tolerance in a decentralized network?

The easiest way to check fault tolerance in a decentralized network is by simulating node failures or downtimes and seeing if the network keeps running smoothly. Take Distributed Validator Technology (DVT) as an example – this can be tested by deliberately causing node outages to ensure the system stays up and running. You can also use local testing tools to mimic failures in a controlled setting, allowing you to assess how the network deals with disruptions without needing to test in a live environment.